Let’s talk about the ethical use of AI in clinical supervision, within a cultural, legal and clinical framework.

Artificial intelligence is already part of the supervision landscape.

Supervisees are using AI to draft case summaries.

Clients are consulting AI for mental health advice.

Practitioners are experimenting with AI-assisted documentation and treatment planning.

The question is no longer whether AI will enter clinical supervision.

The question is how we respond.

If we treat AI as a harmless shortcut, we risk ethical drift.

If we treat it as a threat, we miss an opportunity for thoughtful integration.

What we need instead is a grounded, ethical, culturally aware response.

AI Is Not Just a Tool — It Is a Cultural Force

Technology is never neutral. It shapes how we think, how we relate, and how we assign authority.

AI is not simply software. It participates in meaning-making. It influences language. It mirrors dominant cultural narratives. It creates the illusion of objectivity.

When a supervisee consults AI before bringing a case to supervision, authority has shifted. Reflection has been mediated. Clinical thinking has already been influenced by an algorithm.

That doesn’t make AI unethical.

But it does mean supervision must evolve.

Supervisors must now ask:

- Are you using AI in your clinical workflow?
- For what purposes?
- With what safeguards?
- With what awareness of bias?
- With what understanding of privacy risk?

These questions are not punitive. They are protective.

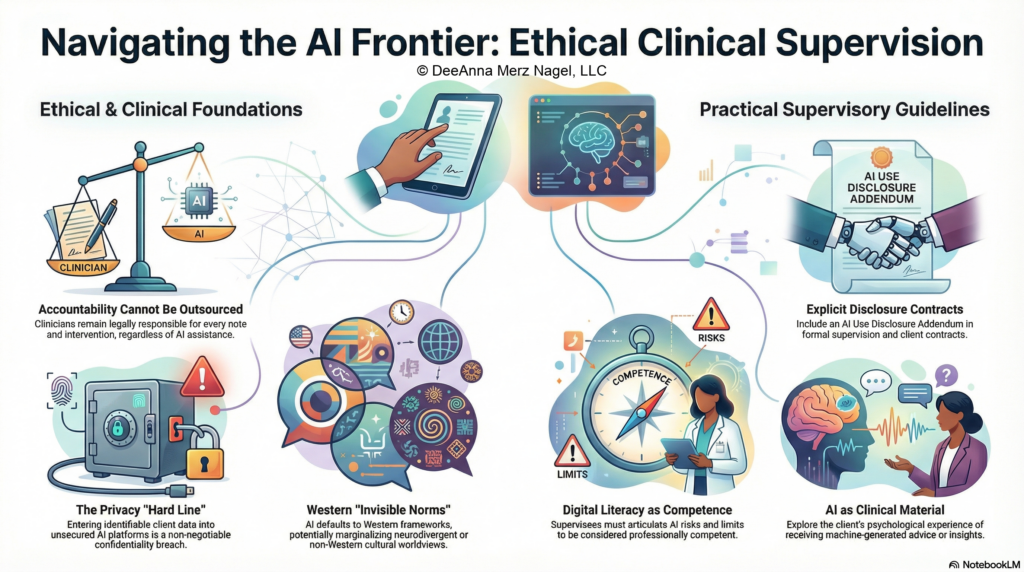

Ethical and Legal Responsibility Does Not Transfer to a Machine

No matter how polished the output, AI does not carry professional accountability.

It cannot:

- Assess risk in real time
- Evaluate tone, silence, or embodied distress
- Detect dissociation through micro-expression
- Hold trauma in the relational field

And it certainly cannot be subpoenaed.

The clinician remains legally and ethically responsible for every note, every formulation, every intervention.

Supervision must reinforce this: AI may assist, but it may not replace clinical judgment.

Entering identifiable client information into public or unsecured AI platforms constitutes a potential breach of confidentiality. This is not a gray area.

Digital literacy is now part of professional competence.

Projection and the Illusion of Authority

There is also a psychological layer.

Clients may experience AI as:

- A neutral authority
- A safer confessor
- A more objective diagnostician

Practitioners may experience AI as:

- Efficient
- Unbiased
- Smarter
- Faster

But AI is trained on large datasets shaped by cultural norms, systemic bias, and dominant frameworks. It reflects the assumptions embedded in those datasets.

When clients bring AI-generated insights into therapy, that material becomes clinically meaningful.

We might explore:

- What did it feel like to receive advice from a machine?
- What authority did you give it?
- Did it validate you or invalidate you?
- Did it simplify something complex?

Rather than dismissing AI use, supervision can help clinicians process it as part of the client’s lived experience.

AI is now part of the narrative ecology.

Cultural Considerations: Bias, Power, and the “Invisible Norm”

AI systems tend to default to mainstream diagnostic language and Western conceptual frameworks. This can unintentionally marginalize:

- Neurodivergent expressions
- Non-Western spiritual worldviews
- Community-based healing traditions
- Trauma expressions that do not fit tidy categories

Supervision must encourage critical thinking:

- Whose worldview is reflected in this output?
- Does this formulation align with the client’s cultural context?
- What might be missing?

Cultural humility does not disappear in digital spaces. It becomes more essential.

Gatekeeping in the Age of AI

Supervisors have a gatekeeping role. That now includes digital competence.

If a supervisee cannot articulate:

- The risks of AI use
- The limits of algorithmic output
- The difference between brainstorming and decision-making
- The privacy implications of various platforms

…that is a competence issue.

This is not about prohibiting AI.

It is about ensuring that supervisees remain reflective, accountable clinicians — not passive consumers of machine-generated language.

Practical Guidelines for Supervisors

A thoughtful supervisory response may include:

- Explicit discussion of AI use in supervision contracts
- Clear prohibition of entering identifiable client data into unsecured platforms
- Reviewing AI-assisted notes critically during supervision
- Exploring client use of AI as clinical material
- Reinforcing that AI output must always be evaluated, contextualized, and refined

Supervision should not become a technology policing space.

It should remain what it has always been: a reflective, relational, ethical space.

AI simply adds a new dimension to that reflection.

The Larger Professional Shift

Every technological shift in mental health has raised questions about legitimacy, safety, and ethics. Over time, the profession has adapted — not by abandoning its principles, but by applying them more carefully.

AI does not require a new ethical foundation.

It requires a deeper application of the one we already have.

Confidentiality still matters.

Competence still matters.

Cultural humility still matters.

Relational accountability still matters.

Clinical supervision is where we learn to navigate new terrain without losing our ethical center.

AI is here.

Our task is not to fear it.

Our task is to remain human while we engage it.

The Clinical Supervision Series course (Part 1) offers a sample Artificial Intelligence (AI) Use Disclosure Addendum that can be added to the formal contract (a legal review of contracts is highly recommended).

References

Nagel, D. M. (2026, January 7). AI, projection, and the psychology of meaning. DeeAnnaMerzNagel.com. https://deeannamerznagel.com/ai-projection-and-the-psychology-of-meaning/

Nagel, D.M. & Anthony, K., with Louw, G. (2012). Cyberspace as Culture: A New Paradigm for Therapists and Coaches. Therapeutic Innovations in Light of Technology. Volume 2, Issue 4: 24-36.

Spooner, J. (2025, March 2). The ethics of ChatGPT and AI in mental health. SimplePractice. https://www.simplepractice.com/blog/ethics-of-artificial-intelligence-in-mental-health/

Stretch, L., Nagel, D.M. & Anthony, K. (2016). An updated ethical framework for the use of technology in supervision. In S. Goss, K. Anthony, L. Stretch & D.M. Nagel (Eds.), Technology in mental health: applications in practice, supervision and training (pp. 303-309). Charles C. Thomas Publisher: Springfield, IL.

Stretch, L.S., Nagel, D.M. & Anthony, K. (2013). Ethical Framework for the Use of Technology in Supervision. Therapeutic Innovations in Light of Technology. Volume 3, Issue 2: 39-45.